AI has moved from controlled pilots to everyday work faster than most enterprise governance models were built to handle. What began as experimentation is now embedded across content creation, analytics, customer interactions, development workflows, and decision support. AI now dominates 2026 transformation budgets, commanding more executive attention and spending than security or customer experience.

According to the ROI of Enterprise Digital Transformation report, 75% of leaders now rank AI as their top technology priority. However, many organizations are discovering that investment alone does not translate into business outcomes.

The core risk holding enterprises back is not AI, but invisible AI usage. When user adoption unfolds unevenly across roles, teams, and workflows, leaders lose visibility into how AI is actually used, where value is created, and where risk accumulates.

This invisibility compounds an existing challenge. Large organizations already struggle to quantify digital transformation ROI, and 61% of leaders still rely primarily on qualitative feedback to measure success. AI makes this harder. Without AI adoption and usage analytics, progress feels subjective, reinvestment decisions lack discipline, and friction that slows outcomes goes unnoticed. Over time, this measurement gap is what separates AI programs that scale from those that stall.

Tracking AI usage is a value-realization and risk-management discipline that reveals how AI is adopted within real workflows. Without it, organizations cannot scale, govern, or optimize their AI investments.

Why AI Usage Tracking Is Essential

AI usage tracking is not about counting logins or monitoring individual activity. It is about understanding whether employees are using the right AI tools, applying them effectively through strong prompts, and integrating AI into their workflows in ways that genuinely accelerate work.

Without this visibility, leaders are forced to rely on assumptions. License data may confirm access, but it cannot show whether AI is improving productivity, where adoption is stalling, or which teams are creating real value. Usage tracking fills this gap by revealing how AI is used inside real tasks, not just whether it is available.

This insight transforms AI from a sunk cost into a measurable investment. Organizations can justify spending based on actual adoption and outcomes, identify power users and high-performing teams, and uncover friction points that slow scale. Most importantly, it enables targeted, in-context support for employees who need guidance, rather than generic training or reactive enforcement. When AI usage is visible, organizations can enable user adoption with precision and scale it with confidence.

How AI Usage Tracking Enables AI Governance

AI governance fails when AI usage is invisible. Most enterprises rely on policies, approvals, and licenses to control AI, yet these mechanisms do not reflect how AI is actually used in day-to-day work. Access does not equal adherence, and policy intent often breaks down inside real workflows. You cannot govern or scale AI you cannot see.

Why AI usage visibility matters:

- Bridges the gap between AI policy and real behavior: Policies and licenses show access, not application. Usage visibility reveals how AI is used inside workflows and whether it aligns with governance intent.

- Exposes hidden risk before it becomes an incident: Without visibility, shadow AI, risky prompts, and data exposure emerge unnoticed. With it, issues surface early.

- Enables governance at the workflow level: CIOs can oversee how AI is embedded into real tasks, where risk and value actually live.

- Enables early intervention and course correction: Risky or ineffective usage patterns can be addressed through guidance and controls before escalation.

Key Types of AI Adoption Metrics

Measuring AI success requires more than tracking access or license utilization. Effective AI adoption metrics connect usage to workflows, and workflows to outcomes. Together, these three layers (usage, workflow, and outcome-based metrics) give leaders a clear picture of whether AI is being adopted, how it is used, and what impact it delivers.

Usage Metrics

Usage metrics answer the foundational question: who is using AI, and how consistently? These metrics help organizations understand adoption breadth and depth across the enterprise, while revealing where additional enablement is needed. Usage metrics include:

- Adoption rate by role, department, and seniority: Shows where AI is gaining traction and where adoption is uneven or stalled.

- Daily active user rate: Indicates whether AI is part of everyday work or limited to occasional use.

- Engagement depth: Measures how extensively users interact with AI features, beyond surface-level experimentation.

- AI onboarding completion rate: Reveals whether employees are being properly enabled to use AI effectively from the start.
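As a rough illustration, the first two usage metrics can be computed from a simple export of AI interaction events. The sketch below is not tied to any particular product: it assumes a hypothetical event record (`UsageEvent` with a user ID, department, and date), a headcount table per department, and a count of licensed users. Real data sources, field names, and metric definitions will differ.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class UsageEvent:
    """One AI interaction. Field names are illustrative, not a real export schema."""
    user_id: str
    department: str
    day: date


def adoption_rate_by_department(events, headcount):
    """Share of each department's employees with at least one AI interaction."""
    adopters = defaultdict(set)
    for event in events:
        # Count distinct users, not events, so heavy users are not over-weighted.
        adopters[event.department].add(event.user_id)
    # Departments with zero headcount are skipped to avoid dividing by zero.
    return {
        dept: len(adopters[dept]) / total
        for dept, total in headcount.items()
        if total > 0
    }


def daily_active_user_rate(events, licensed_users, days):
    """Average daily share of licensed users who used AI at least once that day."""
    if licensed_users <= 0 or not days:
        return 0.0
    active_by_day = defaultdict(set)
    for event in events:
        active_by_day[event.day].add(event.user_id)
    total_active = sum(len(active_by_day[d]) for d in days)
    return total_active / (len(days) * licensed_users)


# Example with made-up data
events = [
    UsageEvent("u1", "Sales", date(2026, 1, 5)),
    UsageEvent("u2", "Sales", date(2026, 1, 5)),
    UsageEvent("u1", "Sales", date(2026, 1, 6)),
    UsageEvent("u3", "Finance", date(2026, 1, 6)),
]
print(adoption_rate_by_department(events, {"Sales": 4, "Finance": 5}))
print(daily_active_user_rate(events, 9, [date(2026, 1, 5), date(2026, 1, 6)]))
```

In this made-up example, Sales shows 50% adoption (2 of 4 employees) and Finance 20% (1 of 5). The daily active rate here is normalized by licensed users; some teams prefer total headcount as the denominator, which answers a different question (reach across the workforce rather than utilization of paid seats).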

Workflow Metrics

Workflow metrics move beyond usage to show how AI is embedded into real work. These metrics help leaders understand whether AI is improving execution speed, reducing manual effort, and reshaping how work gets done. Workflow metrics include:

- Total number of AI-enabled workflows: Indicates how deeply AI is integrated across business processes.

- Task completion time: Measures whether AI is accelerating specific activities.

- Cycle time reduction: Shows impact at the process level, not just individual tasks.

- Workflow automation percentage: Highlights the extent to which AI reduces repetitive or manual steps.
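To make the workflow metrics concrete, here is a minimal sketch of cycle time reduction and workflow automation percentage. It assumes, hypothetically, that you can pull per-case cycle times (in hours) for a workflow before and after AI was introduced, and a list of workflow steps flagged as AI-automated or not. The function names and data shapes are illustrative only.

```python
from statistics import median


def cycle_time_reduction_pct(baseline_hours, ai_assisted_hours):
    """Percent drop in median cycle time after AI assistance (positive = faster)."""
    before = median(baseline_hours)
    after = median(ai_assisted_hours)
    if before <= 0:
        raise ValueError("baseline median cycle time must be positive")
    return 100 * (before - after) / before


def automation_pct(steps):
    """Percentage of workflow steps flagged as handled by AI."""
    if not steps:
        return 0.0
    automated = sum(1 for step in steps if step["automated"])
    return 100 * automated / len(steps)


# Example with made-up data for one workflow
baseline = [10, 12, 14]  # hours per case before AI
with_ai = [8, 9, 10]     # hours per case with AI assistance
steps = [
    {"name": "intake", "automated": True},
    {"name": "draft", "automated": True},
    {"name": "review", "automated": False},
    {"name": "approve", "automated": False},
    {"name": "archive", "automated": False},
]
print(cycle_time_reduction_pct(baseline, with_ai))
print(automation_pct(steps))
```

With these sample numbers, median cycle time falls from 12 to 9 hours (a 25% reduction) and 2 of 5 steps are automated (40%). The sketch uses the median rather than the mean so that a few unusually slow cases do not distort the process-level picture.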

Outcome-Based Metrics

Outcome-based metrics connect AI usage directly to business value. Outcome-based metrics include:

- Productivity improvements: Measures output gains at the individual, team, or function level.

- Operational efficiency improvements: Tracks cost reduction, throughput increases, or resource optimization driven by AI.

- Team- and role-specific performance metrics: Ensures AI impact is measured in ways that reflect how different teams create value.
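One simple way to express productivity improvements while respecting team-specific measures is to compare each team's output per hour against its own baseline, in its own unit. The sketch below assumes hypothetical per-team figures (output units and hours worked for a baseline period and an AI-assisted period); the `TeamPeriod` structure and the numbers are illustrative, not drawn from any real dataset.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TeamPeriod:
    """Output and effort for one team over one period (illustrative fields)."""
    output_units: float  # each team's own unit: tickets resolved, proposals sent, etc.
    hours_worked: float


def productivity_change_pct(before: TeamPeriod, after: TeamPeriod) -> float:
    """Percent change in output per hour between a baseline and an AI-assisted period."""
    if before.hours_worked <= 0 or after.hours_worked <= 0 or before.output_units <= 0:
        raise ValueError("need positive hours and positive baseline output")
    rate_before = before.output_units / before.hours_worked
    rate_after = after.output_units / after.hours_worked
    return 100 * (rate_after - rate_before) / rate_before


# Each team is compared only against its own baseline, in its own unit.
teams = {
    "Support (tickets)": (TeamPeriod(400, 800), TeamPeriod(500, 800)),
    "Sales (proposals)": (TeamPeriod(40, 200), TeamPeriod(42, 180)),
}
for name, (before, after) in teams.items():
    print(f"{name}: {productivity_change_pct(before, after):+.1f}%")
```

Because each team is measured in its own unit, the percentages can be compared across teams even though tickets and proposals cannot. Output per hour is only a proxy: it does not by itself separate AI's contribution from seasonality, staffing changes, or other initiatives running in the same period.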

Why Do Enterprises Struggle to Measure AI Usage?

Traditional approaches to measuring AI usage were not designed to capture how AI is adopted in real work. As a result, leaders are left with partial signals that obscure behavior, context, and impact. Several factors make AI usage hard to measure:

- AI usage is fragmented across tools, teams, and workflows: Employees use AI through multiple platforms and embedded features, creating disconnected data and inconsistent visibility.

- Visibility is limited to access, not behavior: License and permission data show who can use AI, not how AI is actually applied in day-to-day work.

- Usage means contextual application, not simply “using” a tool: AI adoption depends on timing, intent, and workflow relevance, which binary usage metrics fail to capture.

- Monitoring and security tools don’t show how AI is actually used: These systems detect activity and risk, but they do not reveal effectiveness, friction, or value creation.

- Training and policies don’t change behavior inside workflows: Without in-app guidance and reinforcement, employees revert to familiar patterns and AI adoption stalls.

How Whatfix Enables Enterprise AI Adoption and Usage Tracking

AI adoption does not fail because models are unavailable or tools are underpowered. It fails when employees are expected to change how they work without the confidence, context, or support to do so. Governance and usage tracking alone cannot drive value unless they are paired with enablement inside real workflows.

Whatfix approaches AI adoption as a people-centric transformation problem. By combining risk-free simulation training, in-app guidance, and behavior-level analytics, Whatfix enables organizations to see how AI is used, where users struggle, and how to intervene with precision. This allows enterprises to govern AI at the workflow level while accelerating adoption, proficiency, and ROI.

1. Pre-Deployment Training in a Risk-Free Sandbox

AI adoption begins before go-live. Asking employees to use AI in live systems without prior practice introduces risk, hesitation, and inconsistent behavior. Confidence must be built before AI becomes part of daily work.

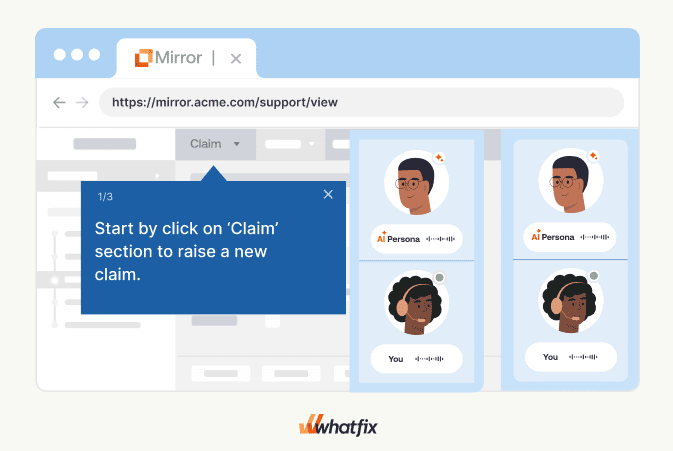

With Whatfix Mirror, organizations can train users in safe, simulated environments that replicate real AI-powered workflows. Employees can practice using AI features, test prompts, and explore use cases without touching production data or impacting live operations. This removes the fear of mistakes while reinforcing approved usage patterns from the start.

By validating prompts, workflows, and AI-assisted tasks ahead of rollout, organizations reduce downstream risk and variability. Users enter production environments with clarity on how AI fits into their role, what “good” usage looks like, and how to apply AI effectively. The result is higher readiness at launch, faster time-to-value, and cleaner usage data once AI is deployed at scale.

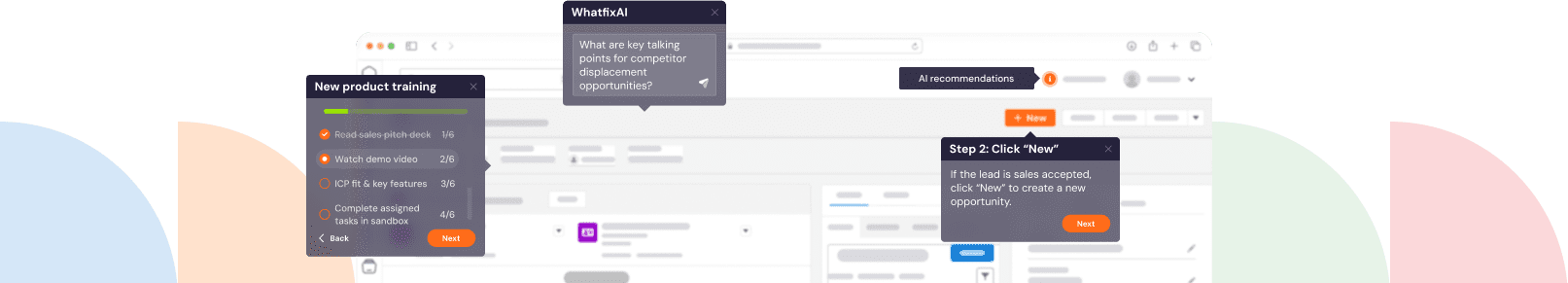

2. Role-Based Onboarding & Training

Generic AI training fails because AI is not used generically. Different roles interact with AI in different ways, within different workflows, and for different outcomes. Yet many organizations still rely on one-size-fits-all enablement that explains what AI can do without showing how it applies to specific jobs. This creates a familiar gap: AI may be available, but it does not feel usable in the context of day-to-day work.

Whatfix closes this gap by delivering role-based training directly inside the applications where AI is used. Guidance is tailored to how specific teams and functions apply AI within their workflows, not abstract use cases. Employees are shown exactly when to use AI, how to invoke it, and what success looks like for their role.

Step-by-step, in-app guidance ensures that learning happens in context, at the moment of need. Users do not have to rely on memory, documentation, or external training sessions. As a result, employees reach proficiency faster, AI becomes part of normal execution, and organizations see quicker time-to-value from their AI investments.

3. Embedded, In-Context Support for Everyday AI Use

Even after onboarding, many employees struggle to use AI effectively. Without guidance at the moment of use, employees either underuse AI, rely on it incorrectly, or abandon it altogether.

The absence of in-the-moment support slows adoption and introduces risk. Users experiment in isolation, copy prompts from unverified sources, or apply AI inconsistently across similar tasks. Over time, this leads to uneven results, declining confidence, and increased misuse that policies alone cannot prevent.

Whatfix addresses this gap with embedded, self-service support delivered directly inside applications. Employees gain instant access to approved prompt examples, AI best practices, and contextual guidance while they work. Support appears when and where it is needed, reinforcing correct usage without interrupting productivity.

By reducing dependence on IT and support teams, Whatfix enables employees to resolve questions independently while maintaining consistency and compliance. The result is higher confidence, more effective AI usage, and sustained adoption that scales across teams and workflows.

4. On-Demand Change Enablement

AI evolves faster than most organizations can retrain their workforce. New features, updated models, and revised governance policies are introduced regularly, yet users often miss these changes or discover them too late. When communication happens through emails, documents, or meetings, important updates rarely translate into changed behavior.

This disconnect fuels change fatigue. Employees are asked to adapt repeatedly, without clear guidance on what has changed, why it matters, or how it affects their daily work. Over time, adoption stalls and governance weakens as users default to familiar patterns.

Whatfix enables on-demand change communication directly inside workflows. AI feature launches, updates, and governance reminders are delivered in-app, at the moment they are relevant. Instead of asking users to remember new rules or capabilities, Whatfix reinforces them contextually as work happens.

By embedding guidance where execution occurs, organizations ensure that AI usage stays aligned with evolving policies and transformation goals. Change becomes continuous, not disruptive, helping enterprises sustain AI adoption while reducing fatigue across teams.

5. Nudging Users Toward Higher-Value AI Use Cases

Most employees begin their AI journey at the surface level. They use AI for simple tasks like drafting text, summarizing information, or answering basic questions. While useful, this level of adoption rarely delivers the productivity gains leaders expect from enterprise AI investments.

Without intentional guidance, AI usage plateaus. Employees are unaware of advanced capabilities, unsure how AI applies beyond basic tasks, or hesitant to change established workflows. Over time, this stagnation limits ROI and leaves much of AI’s potential unrealized.

Whatfix helps organizations move beyond basic usage by nudging users toward higher-value AI use cases. Behavioral nudges are delivered based on role, workflow, and actual usage patterns, encouraging employees to explore advanced or underused AI features when they are most relevant.

These nudges are not generic prompts. They are contextual, timely, and aligned to how different teams work. By guiding users toward deeper, more impactful AI usage, Whatfix enables organizations to unlock sustained productivity gains and continuously increase the value of their AI investments.

6. AI Usage Analytics, Governance, and Continuous Optimization

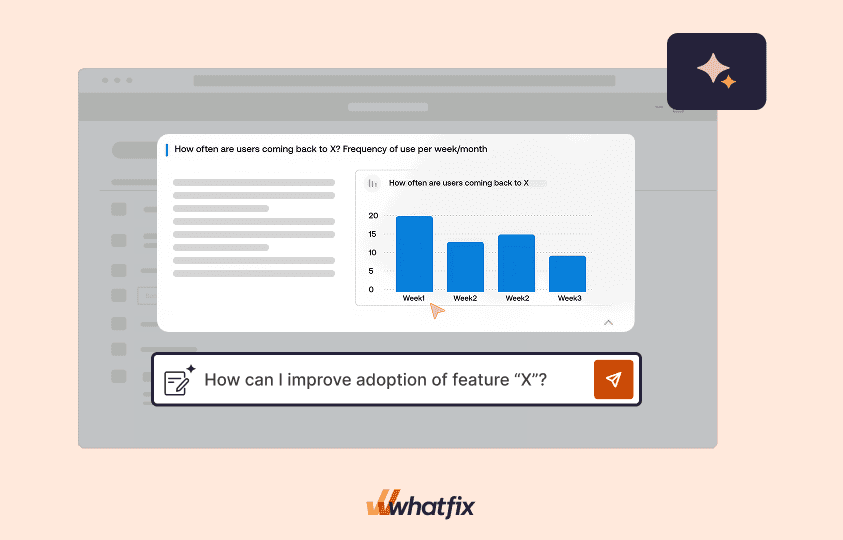

AI ROI and governance break down when organizations lack visibility into how AI is actually used. Login data and license metrics may show adoption at a surface level, but they offer no insight into behavior, effectiveness, or risk inside real workflows. Without this visibility, leaders cannot distinguish between meaningful usage and stalled adoption, or between compliant behavior and emerging risk.

Whatfix closes this gap by tracking AI usage at the workflow and feature level. Instead of measuring access, organizations gain insight into how AI supports tasks, where users disengage, and which interactions introduce friction or risk. This allows leaders to move beyond assumptions and base decisions on real usage patterns.

These insights power continuous optimization. When drop-offs, inefficiencies, or risky behaviors are detected, Whatfix enables targeted interventions through in-app guidance, nudges, and contextual support. Enablement evolves alongside usage, ensuring AI adoption improves over time rather than degrading after rollout.

By combining usage analytics with in-the-flow enablement, Whatfix transforms AI governance from a static control function into a dynamic system of learning and improvement. Organizations gain the visibility needed to govern responsibly, optimize continuously, and maximize the long-term value of their AI investments.

From Tracking AI Usage to Scaling AI Impact

AI success is not determined by how many tools are deployed or how quickly licenses are assigned. It is defined by how effectively people use AI inside real workflows, every day. Without visibility into usage, adoption stalls, governance weakens, and ROI remains elusive. With the right enablement and insights, AI becomes a scalable, governable capability that drives measurable outcomes.

Whatfix helps organizations move from invisible AI usage to confident, responsible, and high-impact adoption. By combining simulation-based training, in-the-flow guidance, and workflow-level analytics, Whatfix enables enterprises to govern AI without slowing innovation and scale usage without increasing risk. If you are ready to turn AI investment into sustained business value, book a demo with us today to see how Whatfix can help.