In corporate learning and development, the effectiveness of training programs stands as a critical determinant of organizational growth and success. This is where training evaluation models come into play, offering structured frameworks to assess the impact, efficiency, and value of training initiatives.

Training evaluation models provide invaluable insights into how well training resonates with learners, influences behavior change, and aligns with business objectives. In this article, we provide an overview of popular training evaluation models, explore their benefits, share real-world applications, and break down best practices for implementing each.

What Are Training Evaluation Models?

Training evaluation models are systematic frameworks designed to assess the effectiveness, efficiency, and outcomes of training programs within organizations. These models provide a structured approach to measuring the impact of learning and development strategies on learners, as well as their alignment with organizational goals. They help organizations gather data, analyze results, and make informed decisions to optimize training strategies.

Training evaluation models consist of various levels or stages that guide the evaluation process, ranging from assessing participant reactions and learning outcomes to measuring behavior change and business impact. Evaluation models offer valuable frameworks for gauging the effectiveness of training efforts, facilitating continuous improvement, and demonstrating the value of learning initiatives to stakeholders.

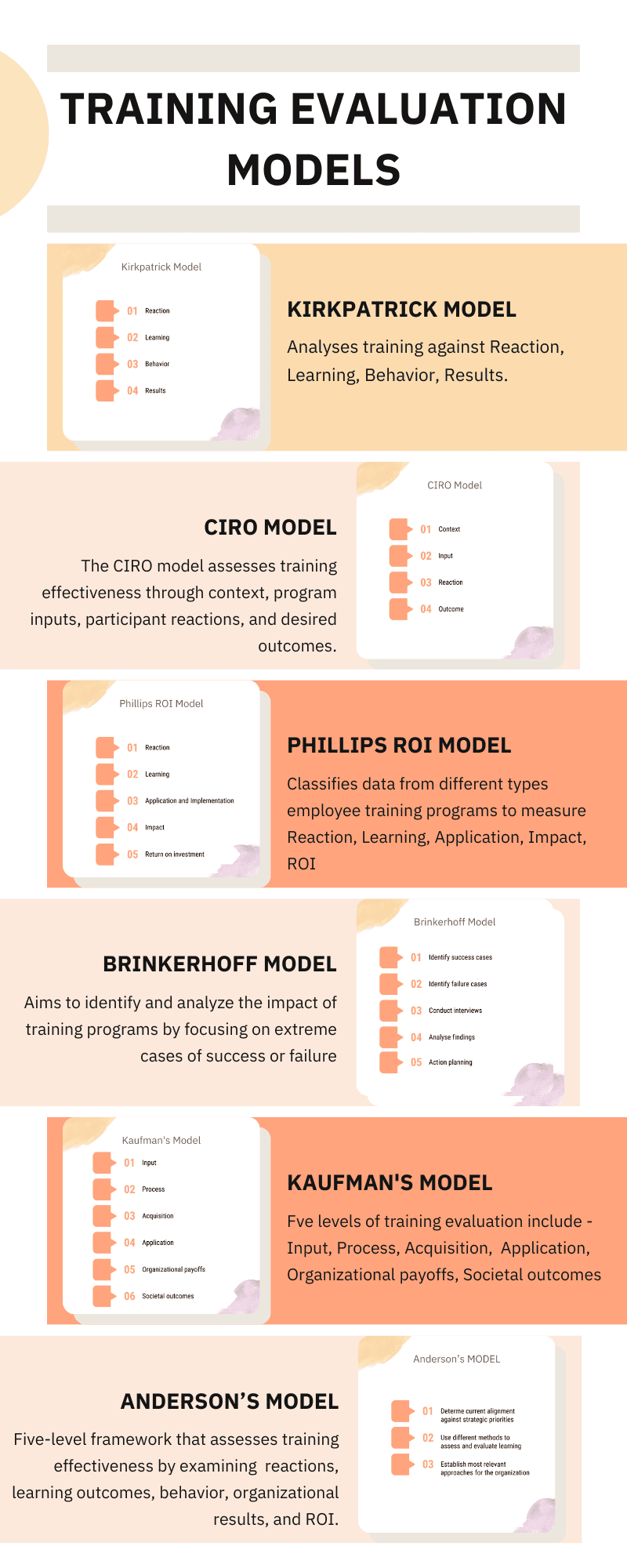

6 Best Training Evaluation Models

Here are six of the most common training evaluation models.

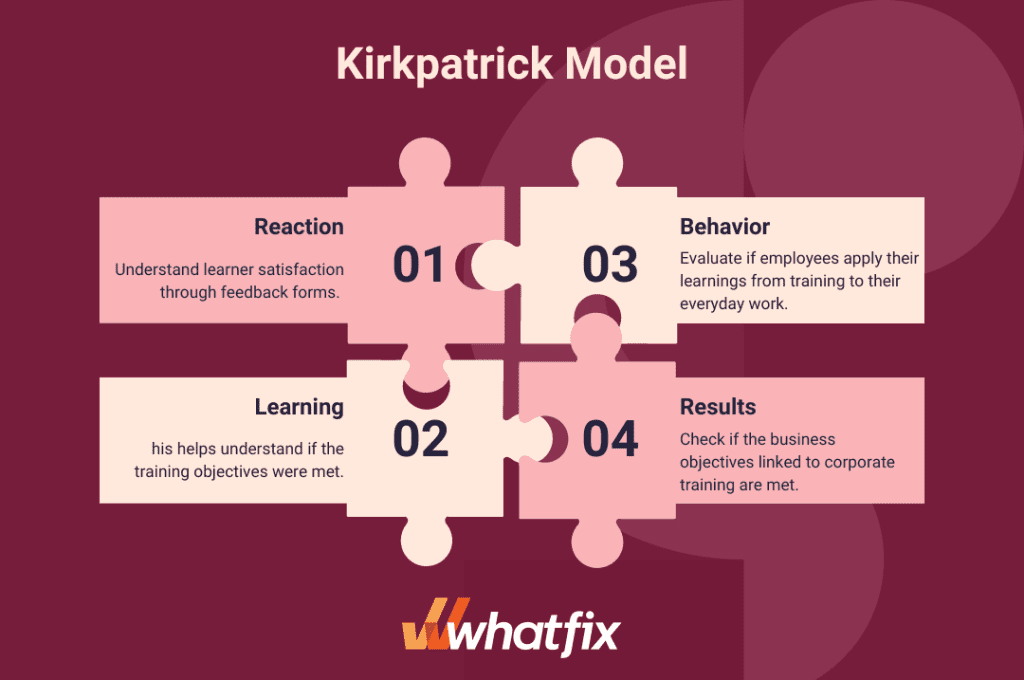

1. Kirkpatrick Model

The Kirkpatrick Model of training evaluation is a well-known L&D evaluation model for analyzing the effectiveness and results of employee training programs. It applies to both formal and informal training and rates programs against four levels of criteria:

- Reaction: Understand learner satisfaction through feedback forms.

- Learning: Gauge understanding of a topic and the degree of skill development through pre- and post-training tests and hands-on assignments. This shows whether the training objectives were met.

- Behavior: Evaluate if employees apply their learnings from training to their everyday work.

- Results: Check if the business objectives (such as greater productivity and fewer errors) linked to corporate training are met.
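
As a rough illustration of the Learning level, the pre- and post-test comparison can be sketched in a few lines of Python. The scores below are hypothetical, and the normalized gain (the share of possible improvement a learner actually achieved) is an assumption added here for illustration, not something the Kirkpatrick Model prescribes.

```python
from statistics import mean


def learning_gain(pre_scores, post_scores, max_score=100):
    """Return (average raw gain, average normalized gain) for paired test scores."""
    if len(pre_scores) != len(post_scores) or not pre_scores:
        raise ValueError("need one pre-test and one post-test score per learner")

    # Raw gain: how many points each learner improved.
    raw = [post - pre for pre, post in zip(pre_scores, post_scores)]

    # Normalized gain: improvement as a share of the improvement that was possible.
    # Learners who already had the maximum score are skipped to avoid dividing by zero.
    normalized = [
        (post - pre) / (max_score - pre)
        for pre, post in zip(pre_scores, post_scores)
        if pre < max_score
    ]
    return mean(raw), (mean(normalized) if normalized else 0.0)


# Hypothetical scores for four learners, out of 100.
pre = [50, 60, 40, 80]
post = [75, 80, 70, 90]

avg_raw, avg_norm = learning_gain(pre, post)
print(f"average raw gain: {avg_raw:.2f} points")   # 21.25 points
print(f"average normalized gain: {avg_norm:.2f}")  # 0.50
```

A positive gain suggests the learning objectives were at least partly met. It says nothing about the Behavior or Results levels, which need on-the-job data.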

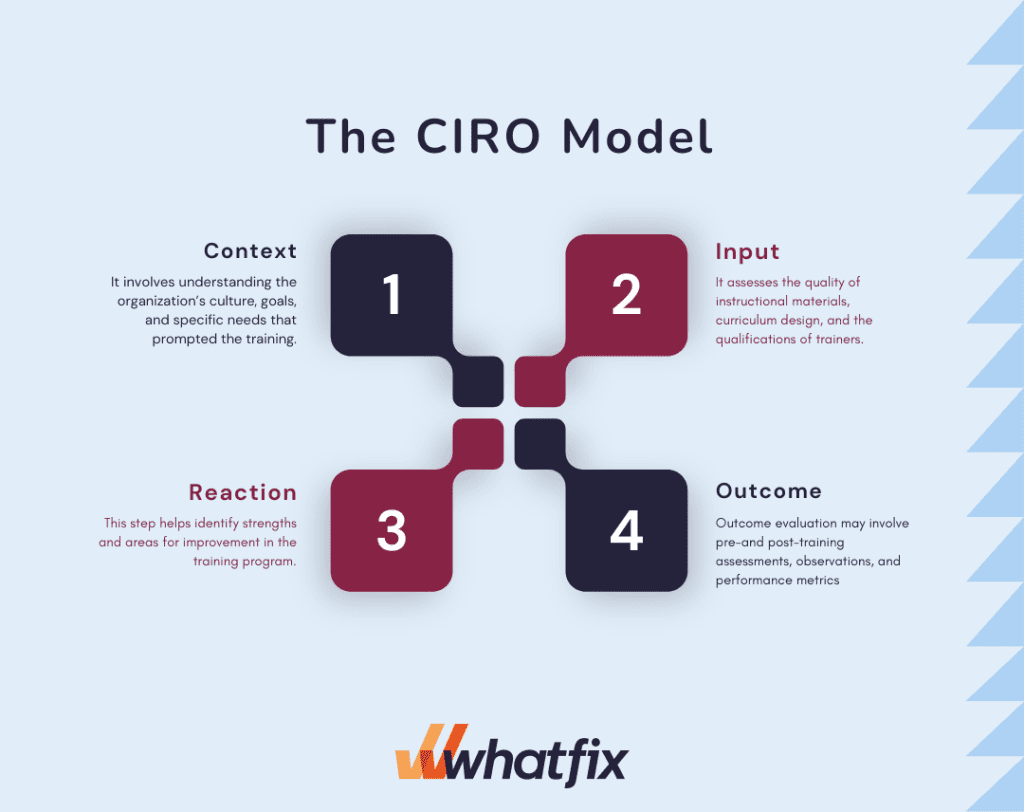

2. The CIRO Model

The CIRO model of training evaluation, developed by Peter Warr, Michael Bird, and Neil Rackham, stands for Context, Input, Reaction, and Outcome. This comprehensive framework offers a holistic approach to assessing the effectiveness of training programs. Here’s a breakdown of each component:

- Context: Context refers to the organizational and environmental factors that influence training effectiveness. It involves understanding the organization’s culture, goals, and specific needs that prompted the training. Context evaluation ensures that the training aligns with the larger organizational strategy and addresses real business challenges.

- Input: Input evaluation focuses on the design and content of the training program. It assesses the quality of instructional materials, curriculum design, and the qualifications of trainers. Input evaluation aims to determine whether the training materials are relevant, engaging, and conducive to effective learning.

- Reaction: Reaction evaluation gauges participants’ immediate responses and satisfaction with the training. It involves collecting feedback through surveys, questionnaires, or discussions to measure participants’ perceptions of the training’s relevance, clarity, and overall quality. This step helps identify strengths and areas for improvement in the training program.

- Outcome: Outcome evaluation assesses the actual impact of the training on participants and the organization. It examines whether the training led to desired behavior changes and improvements in job performance. Outcome evaluation may involve pre- and post-training assessments, observations, and performance metrics to determine the extent to which the training achieved its intended goals.

The CIRO model emphasizes a comprehensive view of training evaluation by considering the broader context, the quality of training materials, participants’ reactions, and the tangible outcomes of the training program. This approach ensures that training efforts are not only well-designed and engaging but also effectively contribute to improved performance and organizational success.

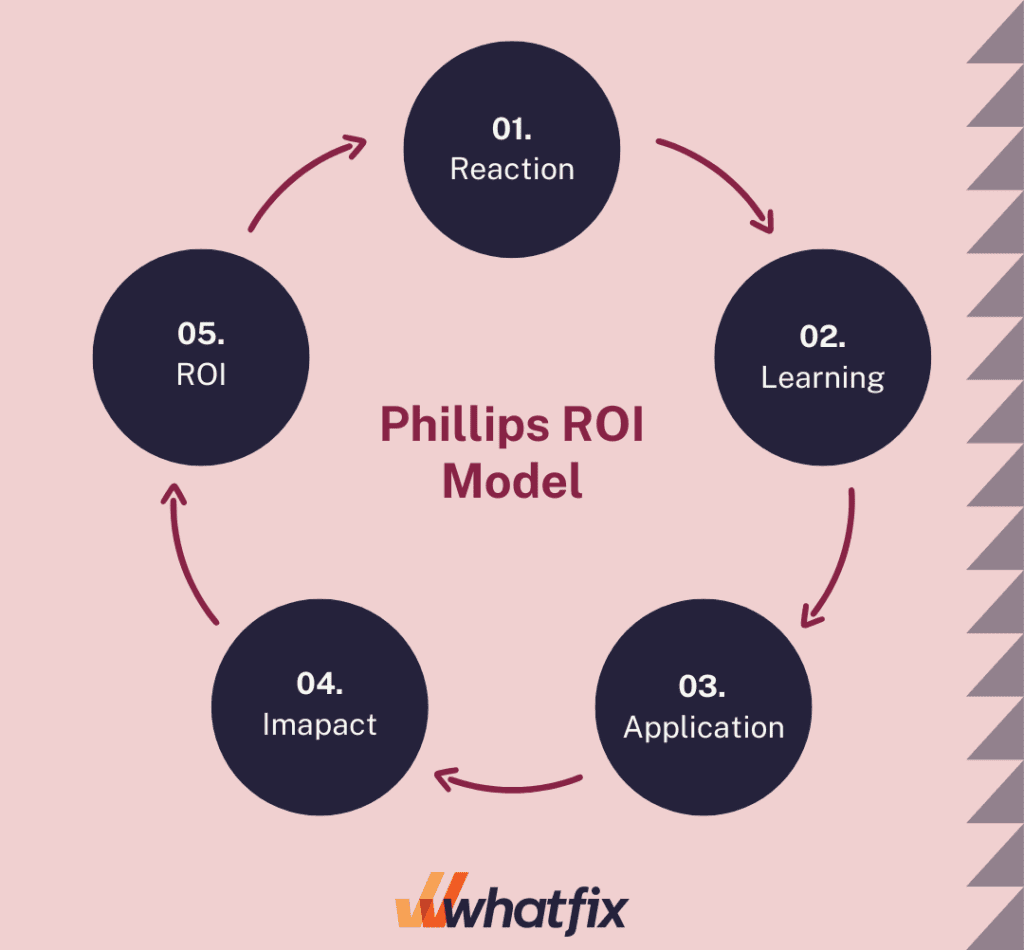

3. The Phillips ROI Model

The Phillips ROI Model is a methodology that ties the costs of training programs to their actual results. It builds on the Kirkpatrick Model and classifies data from different types of employee training programs to measure:

- Reaction: Training managers use short surveys to gather data about participants’ responses to their training.

- Learning: Participants complete a multiple-choice quiz or survey before and after the training so that training managers can determine how much knowledge was acquired.

- Application and implementation: The Phillips model doesn’t only collect data to find if the training worked or not; it also evaluates the WHY behind the success/failure of the training. It adds qualitative feedback to the data process to help organizations improve their training programs.

- Impact: The model lets you analyze the impact of training content and other factors that contribute to participants’ final performance.

- Return on investment: Uses cost-benefit analysis to map impact data to tangible monetary benefits and a set of intangible benefits. Training managers can use this data as hard evidence to prove the value of training to decision-makers.
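
The arithmetic behind the final level is simple. The sketch below uses the standard benefit-cost ratio (benefits divided by costs) and ROI percentage (net benefits divided by costs, times 100). The cost and benefit figures are hypothetical, and it assumes the training benefits have already been converted to a monetary value.

```python
def benefit_cost_ratio(benefits, costs):
    """Monetary benefits returned per unit of currency spent on the program."""
    if costs <= 0:
        raise ValueError("program costs must be positive")
    return benefits / costs


def roi_percent(benefits, costs):
    """Net program benefits as a percentage of program costs."""
    if costs <= 0:
        raise ValueError("program costs must be positive")
    return (benefits - costs) / costs * 100


# Hypothetical figures for one training program.
program_costs = 40_000       # design, delivery, and participants' time
monetary_benefits = 100_000  # e.g. value of fewer errors over one year

bcr = benefit_cost_ratio(monetary_benefits, program_costs)
roi = roi_percent(monetary_benefits, program_costs)
print(f"benefit-cost ratio: {bcr:.2f}")  # 2.50
print(f"ROI: {roi:.0f}%")                # 150%
```

An ROI of 150% here means that every unit of currency invested was recovered, plus a further 1.5 units in net benefit. Intangible benefits are reported alongside this figure rather than folded into it.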

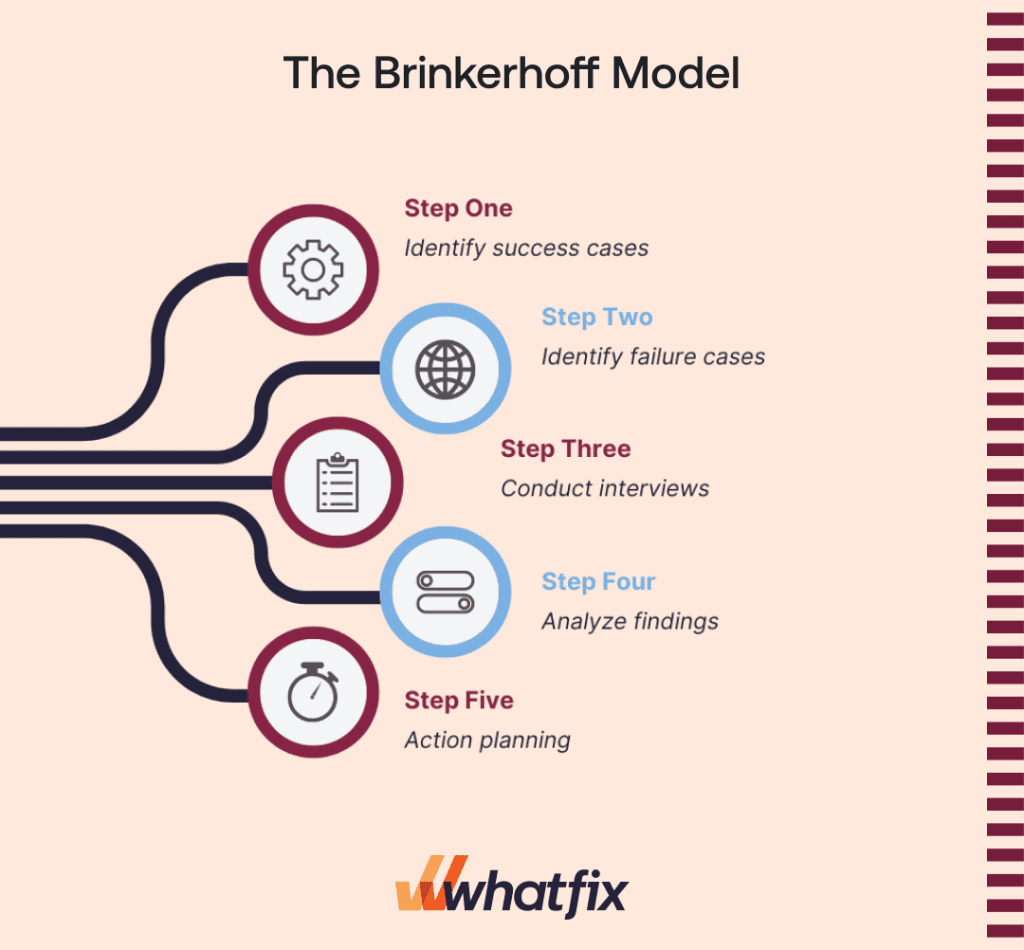

4. The Brinkerhoff Model

Brinkerhoff’s success case method is a comprehensive training evaluation model developed by Robert O. Brinkerhoff. It aims to identify and analyze the impact of training programs by focusing on extreme cases of success or failure.

Here are the key steps involved in implementing Brinkerhoff’s success case method:

- Identify success cases: Identify individuals or groups who have demonstrated exceptional performance as a result of the training. These success cases represent the positive outcomes that can be directly attributed to the training program.

- Identify failure cases: Identify individuals or groups who have not achieved the desired performance improvements despite participating in the training. These failure cases represent the potential barriers or limitations of the training program.

- Conduct interviews: Conduct in-depth interviews with both success cases and failure cases. These interviews aim to gather detailed information about learners’ experiences with the training program, including what aspects of the training worked well and what challenges they faced in applying the learning.

- Analyze findings: Analyze the data collected from the interviews to identify common themes, patterns, and trends. This analysis helps determine the critical success factors as well as the factors that hindered the training effectiveness.

- Action planning: Develop action plans based on feedback and recommendations to enhance the training program. These plans may involve refining the content, delivery methods, and support systems or addressing specific challenges faced by participants.
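
The first two steps can be sketched as a simple ranking exercise. The example below assumes each participant already has a single post-training performance score; the names, scores, and the `pick_extreme_cases` helper are hypothetical. In practice, the cases might come from a short survey rather than from one metric.

```python
def pick_extreme_cases(scores, n=2):
    """Return (success_cases, failure_cases): the n highest and n lowest scorers.

    scores maps a participant identifier to a post-training performance measure.
    """
    if len(scores) < 2 * n:
        raise ValueError("not enough participants to pick distinct cases")
    ranked = sorted(scores, key=scores.get, reverse=True)
    return ranked[:n], ranked[-n:]


# Hypothetical post-training performance scores.
performance = {"A": 92, "B": 48, "C": 75, "D": 31, "E": 88, "F": 60}

success_cases, failure_cases = pick_extreme_cases(performance)
print("interview as success cases:", success_cases)  # ['A', 'E']
print("interview as failure cases:", failure_cases)  # ['B', 'D']
```

The ranking only decides whom to interview. The explanation of why the training worked or failed comes from the interviews and analysis steps, not from the scores.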

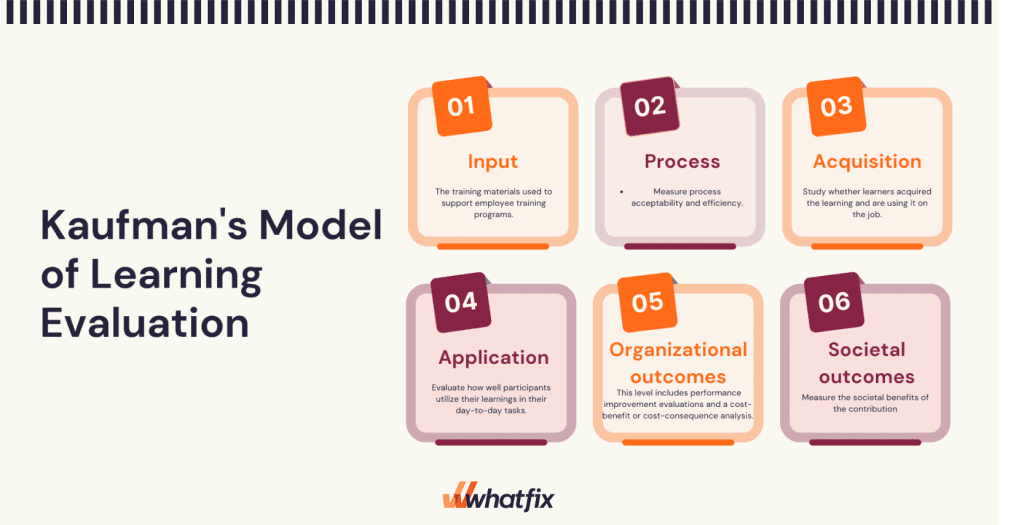

5. Kaufman’s Model of Learning Evaluation

Kaufman’s model also builds on the Kirkpatrick Model and was developed as a response to it, aiming to improve on it in several ways. Kaufman’s five levels of training evaluation, with the first level split into input and process, are:

- Input: Assess the training materials and resources used to support employee training programs.

- Process: Measure process acceptability and efficiency.

- Acquisition: Study whether learners acquired the intended knowledge and skills.

- Application: Evaluate how well participants utilize their learnings in their day-to-day tasks.

- Organizational outcomes: Measure payoffs for the organization as a whole. This level includes performance improvement evaluations and a cost-benefit or cost-consequence analysis.

- Societal outcomes: Measure the benefits the training contributes to clients and to society as a whole.

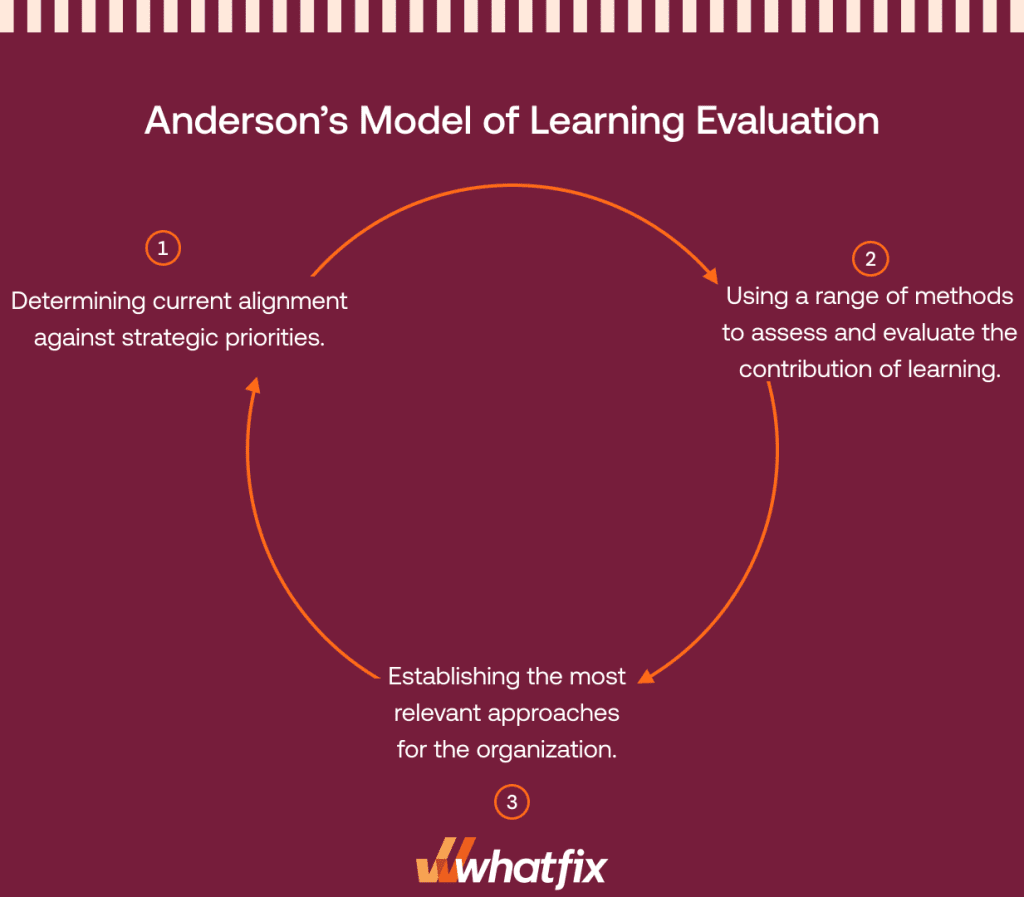

6. Anderson’s Model of Learning Evaluation

The Anderson learning evaluation model is a three-stage evaluation cycle applied at an organizational level to measure training effectiveness. The model focuses on aligning training goals with the organization’s strategic objectives.

The three stages of Anderson’s Model are:

- Determining current alignment against strategic priorities.

- Using a range of methods to assess and evaluate the contribution of learning.

- Establishing the most relevant approaches for the organization.

Benefits of Using Training Evaluation Models

Training evaluation models offer a range of benefits that contribute to the overall effectiveness and impact of training initiatives within organizations.

1. Measure training effectiveness

Training evaluation models provide structured methodologies to measure training effectiveness. By assessing participant reactions, learning outcomes, behavior changes, and organizational impact, these models offer a comprehensive view of how well the training aligns with its objectives and contributes to performance improvement.

2. Identify strengths and weaknesses

Evaluation models help L&D teams identify both strengths and weaknesses in their training initiatives. By collecting and analyzing data at various levels, organizations can pinpoint what aspects of the training are working well and where improvements are needed. These insights guide decision-making for refining content, training delivery methods, and overall training strategies.

3. Enhance training design

Through training evaluation models, organizations gain insights into how training content and materials are being received by participants. This information allows them to tailor training content to better match participants’ needs, ensuring that the training remains engaging, relevant, and aligned with the intended learning outcomes.

4. Improve ROI on training investments

Effective training evaluation models enable organizations to assess the return on investment (ROI) of their training initiatives. By measuring the impact of training on job performance, productivity, and organizational outcomes, organizations can make informed decisions about the allocation of resources and ensure that training efforts are delivering measurable value. Consider the 70-20-10 model as a learning framework to further increase training ROI.

5. Foster accountability

Training evaluation models encourage accountability at various levels. Participants are held accountable for their engagement and application of training content, while trainers and instructional designers are accountable for the effectiveness of their materials. Additionally, organizations can use evaluation results to hold stakeholders accountable for the success of training initiatives within the broader context of business goals.

Challenges In Implementing Training Evaluation Models

Implementing training evaluation models can present several challenges that organizations need to navigate effectively to ensure the success and value of their training initiatives.

1. Limited resources and time constraints

One of the primary challenges is the allocation of sufficient resources, including time, budget, and personnel, for the evaluation process. Organizations might find it difficult to dedicate resources to data collection, analysis, and reporting, especially when facing tight deadlines and competing priorities. Balancing the need for thorough evaluation with limited resources requires careful planning and prioritization.

2. Stakeholder buy-in

Gaining buy-in from stakeholders, including leadership, trainers, participants, and decision-makers, can be challenging. Some stakeholders may view evaluation as time-consuming or unnecessary, particularly if they don’t fully understand the value it brings. Effective communication about the benefits of evaluation and its role in improving training outcomes is crucial for overcoming this challenge.

3. Complexity of evaluation models

Many training evaluation models involve multiple levels of assessment, data collection methods, and analysis techniques. The complexity of these models can be overwhelming, especially for organizations new to the evaluation process. Striking a balance between a comprehensive evaluation approach and one that is manageable and aligned with organizational needs is a challenge that organizations must address.

Best Practices For Implementing Training Evaluation Models

Implementing training evaluation models requires careful planning and execution to ensure accurate and meaningful results. Here are some best practices to consider:

1. Align evaluation with training objectives

Ensure that your evaluation aligns with the specific goals and objectives of the training program. Each level of the evaluation should reflect the intended outcomes of the training, from knowledge acquisition to behavior change and organizational impact.

2. Involve stakeholders from the beginning

Engage key stakeholders, including trainers, participants, and organizational leaders, while designing the evaluation process. Their input ensures that the evaluation model captures relevant aspects of the training and addresses their expectations.

3. Develop reliable data collection methods

Design data collection methods that are both reliable and appropriate for each evaluation level. This includes pre- and post-training surveys, assessments, interviews, observations, and performance metrics. Ensuring data accuracy and consistency is crucial for meaningful analysis.

4. Set baseline measures

Before implementing the training, establish baseline measures to assess the initial state of participants’ knowledge, skills, and behaviors. This baseline serves as a reference point for evaluating the impact of the training and identifying improvements.

5. Encourage honest feedback

Create a safe and non-judgmental environment that encourages participants to provide honest feedback about the training. Anonymous surveys or focus groups enable participants to share their thoughts openly, providing valuable insights for improvement.

6. Communicate evaluation results

Share the evaluation results with stakeholders in a clear and transparent manner. Highlight successes and areas for improvement, and show how the data aligns with the training objectives. Effective communication builds trust and demonstrates the value of evaluation efforts.

7. Continuously improve the evaluation process

Treat training evaluation as an ongoing process of improvement. Regularly review the evaluation methods, incorporate feedback, and refine the process to enhance its effectiveness over time.

Training Clicks Better With Whatfix

Implementing a digital adoption platform such as Whatfix can significantly contribute to evaluating your training programs by providing insights into learners’ actual usage and proficiency with digital tools and processes.

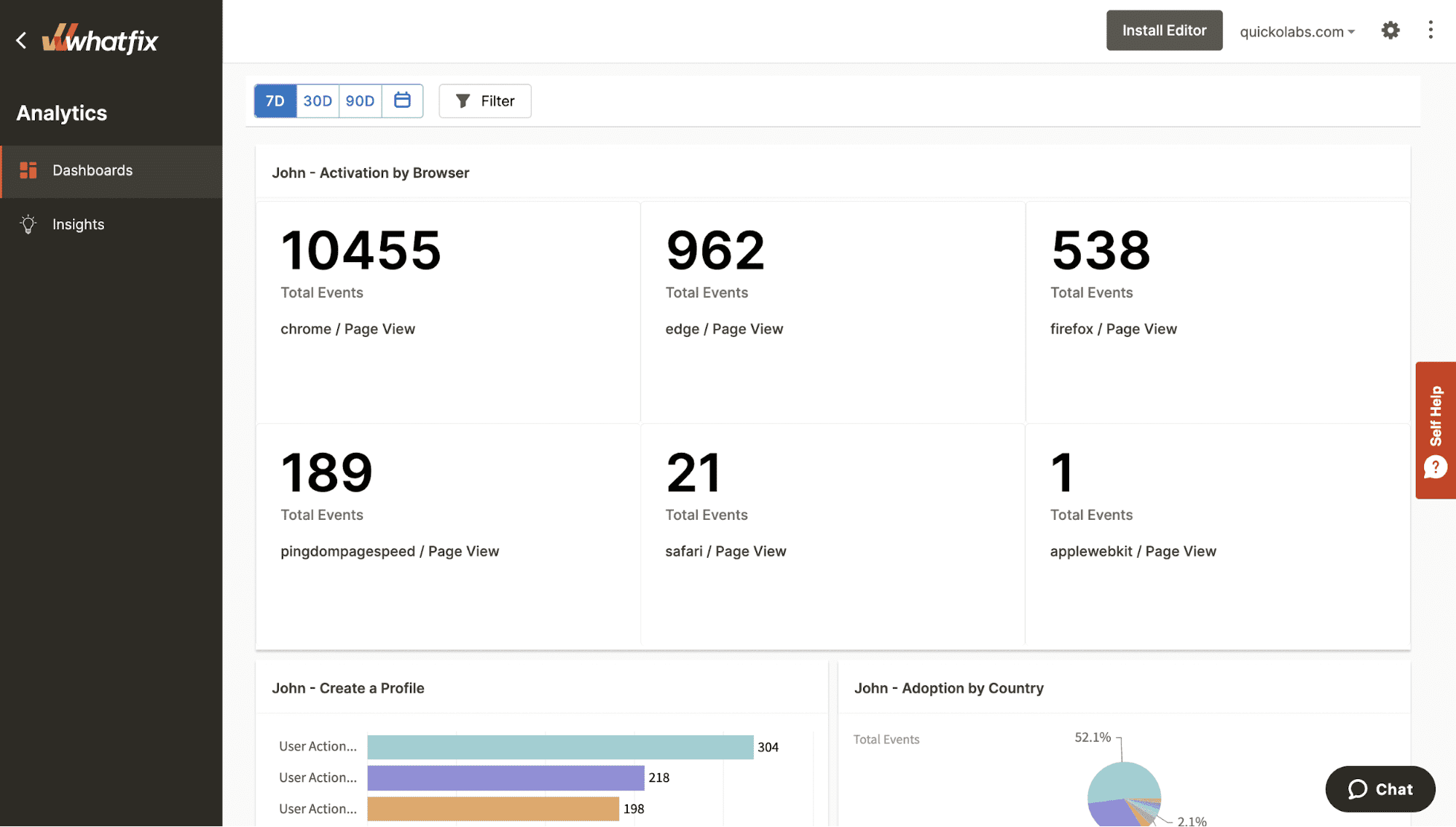

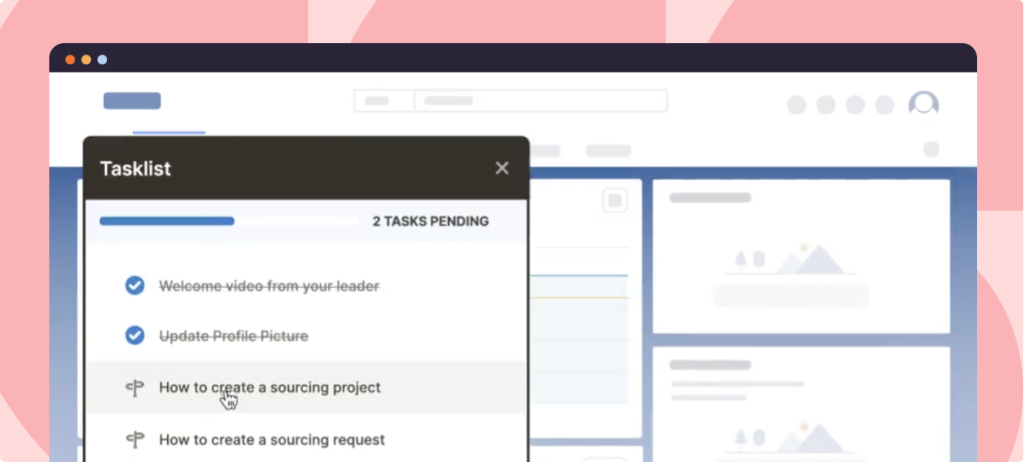

These platforms offer real-time analytics that track employees’ interactions with software applications and digital workflows, capturing data on their actions, completion rates, and task efficiency.

By analyzing this data, L&D professionals can evaluate learners’ adoption and application of the training content in real-world scenarios.

With the ability to monitor user engagement, performance, and feedback, DAPs offer a comprehensive view of training outcomes, enabling organizations to assess their training initiatives’ effectiveness, identify improvement areas, and make data-driven decisions to optimize the learning experience and maximize digital adoption success.